Pentagon dumps millions on mind-control project

The Pentagon’s research arm — the Defense Advanced Research Projects Agency, or DARPA — has just been given $65 million of American taxpayer money to develop a direct link between a human brain and a computer.

Published: July 26, 2017, 10:36 am

The year 2017 is shaping up to be the one in which mind-controlled computing research gathers momentum.

According to DARPA, the project will aim to find a way to “enable rich two-way communication with the brain at a scale that will help deepen our understanding of that organ’s underlying biology, complexity, and function”. If successful, the Neural Engineering System Design (NESD) will “support potential future therapies for sensory restoration”.

So manipulating human brains and altering senses, including “vision, hearing, and speech”, is on the cards, and it sounds particularly scary. DARPA says it wants to create “an implantable package”, which is a device that can be put directly into the brains of those selected for the sensory rewiring. One of the proposed interfaces will be the development of “up to 100,000 untethered, submillimeter-sized ‘neurograin’ sensors implanted onto or into the cerebral cortex.”

Once the device is implanted in the brain, a “relay station transceiver worn on the head” will be used to “wirelessly power and communicate with the implanted device”. It certainly adds a whole new dimension to “hearing voices in your head”.

A team from the University of California, Berkeley is attempting “to create quantitative encoding models to predict the responses of neurons to external visual and tactile stimuli, and then apply those predictions to structure photo-stimulation patterns that elicit sensory percepts in the visual or somatosensory cortices, where the device could replace lost vision or serve as a brain-machine interface for control of an artificial limb”.

“Predict the responses of neurons” means the Pentagon-controlled brain will send messages to the controllers alerting them to thoughts or actions about to enter the person’s conscious mind.

Some academics voiced their support of the project. A paper entitled “The Brain Activity Map Project and the Challenge of Functional Connectomics” includes predictions of the development of “techniques for wireless, noninvasive readout of the activity of neuronal populations”. This research would allow those in control of the project to wirelessly access and control the brains of target populations.

An excerpt from the academic study notes: “This emergent level of understanding could also enable accurate diagnosis and restoration of normal patterns of activity to injured or diseased brains, foster the development of broader biomedical and environmental applications, and even potentially generate a host of associated economic benefits.”

As revealed in the academic study, the NESD is part of a broader initiative called the BRAIN Initiative, short for Brain Research through Advancing Innovative Neurotechnologies. All the projects are broadly interested in finding ways to decode neural data, which would aid in finding techniques to artificially manipulate humans.

The financial magazine Forbes reported that DARPA employs scientists at Carnegie Mellon University to develop “an artificial intelligence system that can watch and predict what a person will ‘likely’ do in the future using specially programmed software designed to analyze various real-time video surveillance feeds”. The system can automatically identify and notify officials if it detects “anomalous behaviors”, suggesting a surveillance world such as the one portrayed in the movie Minority Report.

In its announcement, DARPA named “Detection and Computational Analysis of Psychological Signals (DCAPS)” as one of its primary areas of focus in its BRAIN activity. A separate entry on another part of the DARPA website reveals more about DCAPS and how it could be used:

“DCAPS tools will be developed to analyze patterns in everyday behaviors to detect subtle changes associated with post-traumatic stress disorder, depression and suicidal ideation. In particular, DCAPS hopes to advance the state-of-the-art in extraction and analysis of ‘honest signals’ from a wide variety of sensory data inherent in daily social interactions. DCAPS is not aimed at providing an exact diagnosis, but at providing a general metric of psychological health.

“DCAPS also aims to develop novel algorithms for detecting distress cues from users who opt in to provide data such as text and voice communications, daily patterns of sleeping, eating, social interactions and online behaviors, and nonverbal cues such as facial expression, posture and body movement. The outcomes of these analytical algorithms would be correlated with distress markers from neurological sensors for improved understanding of distress cues.”

Meanwhile, a team from Fondation Voir et Entendre will try to link an artificial retina worn over the eyes with neurons in the visual cortex using optogenetics. In a similar vein, the John B. Pierce Laboratory team aims to create an all-optical prosthesis for the visual cortex using neurons modified to bioluminesce and respond to optogenetic stimulation.

After the first year, the program will move into Phase II, which will look towards human experiments.

All rights reserved. You have permission to quote freely from the articles provided that the source (www.freewestmedia.com) is given. Photos may not be used without our consent.

Ohio disaster: When hedge funds manage rail traffic

East PalestineAfter the derailment of a freight train loaded with highly toxic chemicals in the US state of Ohio, a devastating environmental catastrophe may now be imminent. The wagons burned for days, and a "controlled" explosion by the authorities released dangerous gases into the environment.

US President Biden orders ‘spy’ balloon to be shot down

WashingtonThe US President gave the order to shoot down China's "spy balloon". The balloon had caused US Secretary of State Blinken to cancel a trip to Beijing. In the meantime, a second balloon was sighted.

US is heading for a financial ‘catastrophe’ US Treasury Secretary warns

WashingtonOn January 19, 2023, the United States hit its debt ceiling of $31.4 trillion. The country faces a recession if it defaults on its debt, the US Treasury Secretary warned in an interview. Her warning underscored the danger of printing money.

Gun violence: More risk in Chicago and Philadelphia than Iraq, Afghanistan

Providence, Rhode IslandA striking statistic: young Americans are several times more likely to be injured by a gun in cities like Chicago and Philadelphia than they are while serving as a soldier in a foreign country.

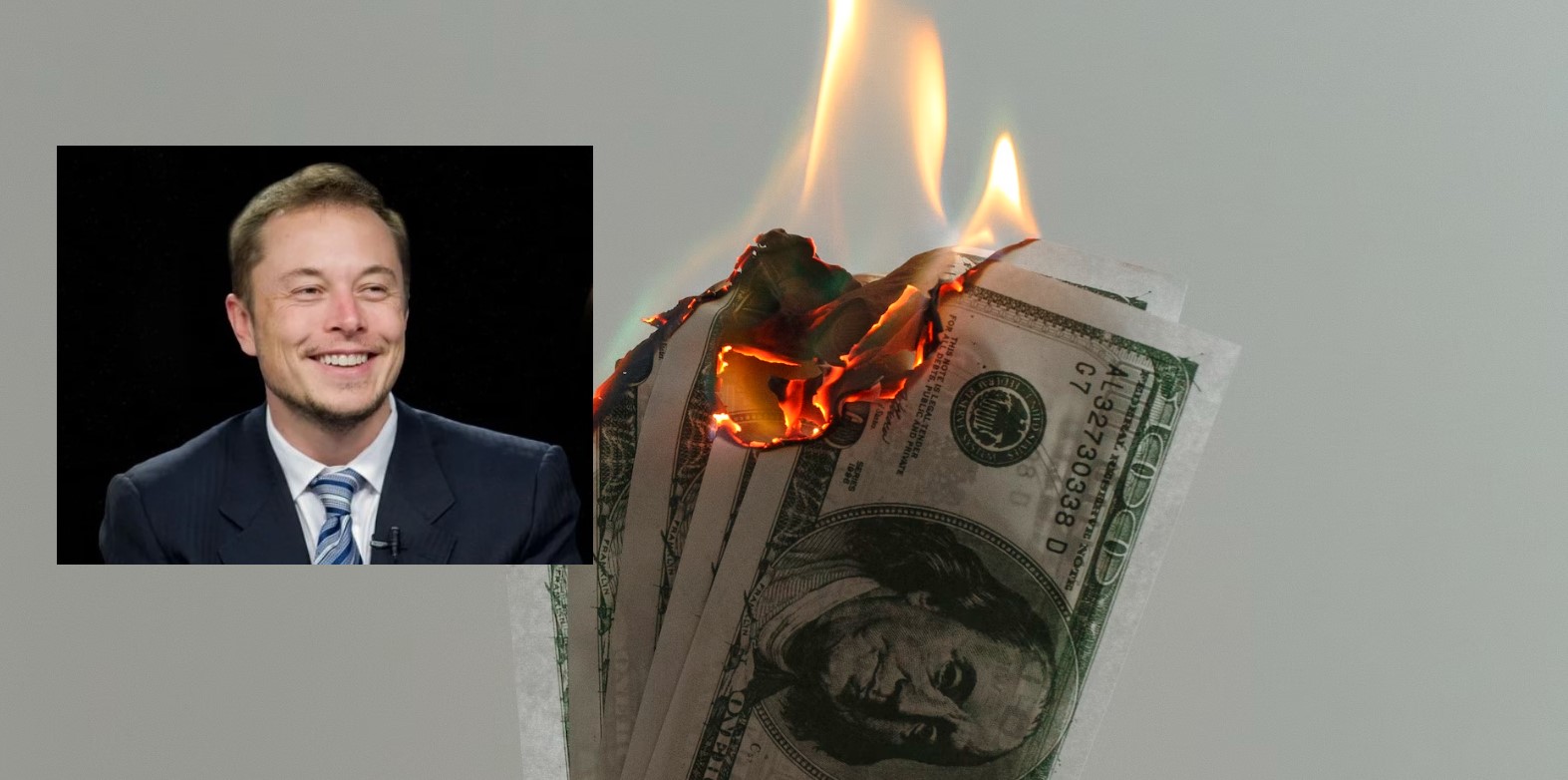

Elon Musk, the first person in history to destroy $200 billion in a year

Never before in human history has a person lost as much money in one year as Elon Musk did in 2022. The Tesla and Twitter boss lost $200 billion last year. However, with his remaining $137 billion, he is still the second richest person in the world.

Extreme cold and winter storms sweep across US

More than a million households without electricity, thousands of canceled flights, temperatures in the double-digit minus range and already 41 fatalities: The US is being overwhelmed by an enormous cold wave.

Soros sponsors violent leftists and anti-police lobby as US crime surges

WashingtonThe mega-speculator and "philanthropist" George Soros remains true to himself – he has been sponsoring anti-police left-wing groups with billions of dollars.

FTX Founder Sam Bankman-Fried arrested after crypto billions go missing

NassauHe is no longer sitting in his fancy penthouse, but in a cell in the Bahamas: Sam Bankman-Fried (30), founder of the crypto company FTX, is said to be responsible for the theft of 37 billion euros. An interesting fact is that media in the EU have so far kept this crime thriller almost completely secret.

How Twitter helped Biden win the US presidency

WashingtonThe short message service Twitter massively influenced the US presidential election campaign two years ago in favor of the then candidate Joe Biden. The then incumbent Donald Trump ultimately lost the election. Internal e-mails that the new owner, Elon Musk, has now published on the short message service show how censorship worked on Twitter. The 51-year-old called it the “Twitter files”.

Alberta PM suspends cooperation with WEF

EdmontonThe newly elected Premier Danielle Smith of the province of Alberta in Canada has recently made several powerful statements against the globalist foundation World Economic Forum and its leader Klaus Schwab. She has also decided to cancel a strange consulting agreement that WEF had with the state.